Disclaimer: NourishNot is an independent app developed solely by me. The views and content here are separate from any content related to my other business activities.

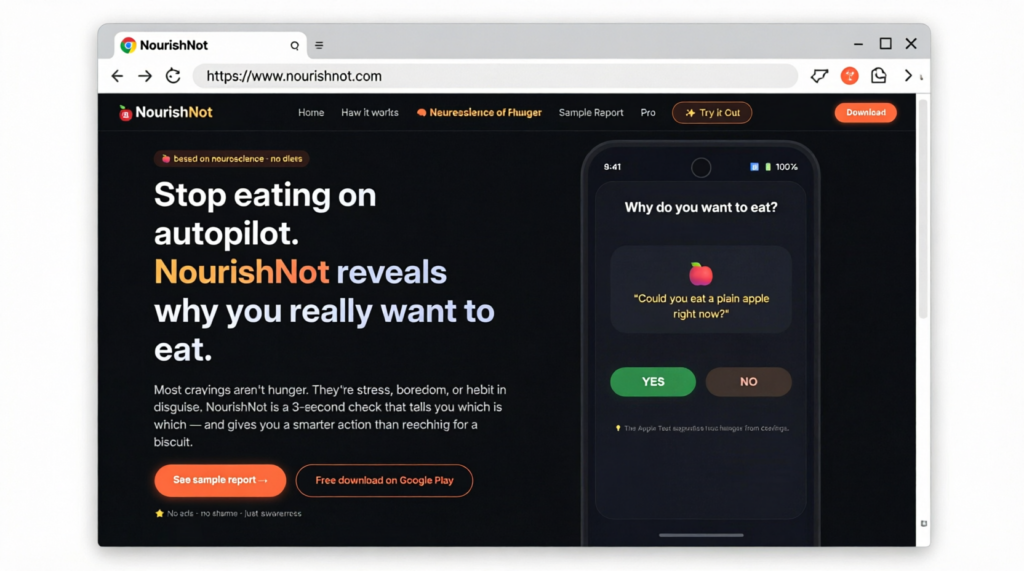

Cravings aren’t a lack of willpower. They’re brain signals shaped by habit, stress, and reward. Here’s how NourishNot uses neuroscience to quiet those urges for real, lasting weight loss.

The Hidden Driver of Overeating

Most diets focus on what you eat. But the real question is why you eat when your body doesn’t need fuel.

Neuroscience shows that eating behaviour is governed by several brain circuits:

- The reward system (dopamine) – makes high‑calorie foods feel pleasurable.
- The habit circuit (basal ganglia) – turns repeated eating into automatic behaviour.
- The stress response (cortisol, amygdala) – drives emotional eating and cravings for sugar/fat.

These systems evolved to keep us alive during scarcity. But in today’s environment of constant food cues, they become overactive – leading to weight gain that feels out of control.

How NourishNot Works with Your Brain, Not Against It

Most weight loss methods try to suppress hunger or rely on willpower. That rarely works long‑term because you’re fighting your own neurobiology.

NourishNot takes a different approach: neuromodulation through awareness and small, targeted actions.

The app helps you:

- Identify the trigger – Is it true hunger, a habit loop, or an emotional cue?
- Weaken the signal – Using evidence‑based techniques like urge surfing, stimulus control, and cognitive reframing.
- Rewire the response – Over time, your brain learns new associations, reducing the power of automatic eating urges.

What the Science Says

Research published in journals such as Appetite and Neuroscience & Biobehavioral Reviews, along with clinical studies of cue exposure therapy and mindful eating, suggests that you can down‑regulate the brain’s response to food cues.

By repeatedly practising small interventions when an urge appears, you strengthen prefrontal cortex control over the limbic system – meaning you regain choice, not just resistance.

Lasting Weight Loss Without Deprivation

When the brain’s “eat” signals become quieter, you don’t need superhuman willpower. You simply experience fewer, weaker urges. That leads to:

- Natural portion control
- Less snacking on autopilot
- Reduced emotional eating
- Weight loss that stays off

Start Rewiring Today

NourishNot guides you through daily 2‑minute neuroscience exercises. No meal tracking, no banning foods – just brain training for a healthier relationship with eating.

Open beta coming soon!

This post is part of the NourishNot blog. We translate neuroscience into daily actions for lasting weight loss.